Key Points:

- Metasurfaces are well suited to implement array-based imaging systems in which a tiled sequence of multiple optical apertures captures distinct information from a scene in a single snapshot and is then paired with computational postprocessing to provide task-relevant information

- Key applications include 3-D imaging, hyperspectral imaging, polarization-resolved imaging, and information processing (e.g., using meta-optics that implement convolution operations)

- While array systems are promising, there are a number of practical challenges related to limited sensor real estate, the need to customize sensors to maximize performance, and associated impacts on image resolution and pixels per degree for imaging systems when balanced with form factor and power budget constraints

Introduction

While most cameras today are monocular, a number of imaging systems and more complex camera architectures exploit two or more apertures to capture information from a scene that is difficult to obtain with a single aperture. One example is stereo cameras, which exploit the parallax between two independent views of a scene, captured with separate cameras, to provide three-dimensional information. Lightfield cameras are another example, in which arrays of multiple lenses help to convey additional three-dimensional information from a scene. In other cases, information other than depth is captured, as in snapshot-based hyperspectral cameras, in which many lenses are positioned adjacent to one another above a single sensor but with a distinct color filter for each lens, providing color information beyond that of standard RGB sensors. Underlying all of these cases is the idea that capturing a separate image with a slightly different configuration (e.g., a spatial offset, a different color filter, or a different lens design with a modified point spread function) provides additional information that, in combination with postprocessing software, can yield richer data that benefit the user’s application.

Metasurfaces are particularly well suited to these types of array-based systems. While refractive arrays do exist, the processes used to build them vary and, depending on the lens specifications, they can suffer from poor alignment tolerances and limited lens speeds. Fabricating a metasurface-based array is no more complicated than fabricating a single metasurface, as one need only extend the design pattern in the lithography stage of the nanofabrication process. In many cases, if the lens design is unmodified across the array, the exact same pattern can be duplicated. Furthermore, because the array is built on a flat substrate, it also facilitates integration with the sensor via standoffs or spacer beads between the sensor and substrate. In this article, we’ll briefly describe a few key use cases and design considerations for metasurface-based imaging arrays.

Varied Point Spread Functions

One use case for a metasurface array is acquiring additional information from a scene by modifying the point spread function (PSF) of each lens in the array. The PSF characterizes the optical response of a lens, and its intensity distribution determines the types of information that the lens can capture. The Fourier transform of the PSF is the optical transfer function (OTF), the magnitude of which is the modulation transfer function (MTF). The MTF is essentially a measure of how efficiently spatial frequency information from an object is transferred to an image.
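As a compact restatement of these definitions, the relationship can be written as follows. The symbols $f_x$, $f_y$, and $m$ are notation introduced here for illustration, and the expressions assume shift-invariant incoherent imaging.

```latex
\mathrm{OTF}(f_x, f_y) =
  \frac{\iint \mathrm{PSF}(x, y)\, e^{-i 2\pi (f_x x + f_y y)}\, dx\, dy}
       {\iint \mathrm{PSF}(x, y)\, dx\, dy},
\qquad
\mathrm{MTF}(f_x, f_y) = \left| \mathrm{OTF}(f_x, f_y) \right|
```

Under this normalization the MTF equals 1 at zero frequency, and a sinusoidal object pattern with modulation depth $m$ at frequency $(f_x, f_y)$ is imaged with modulation depth $\mathrm{MTF}(f_x, f_y)\, m$.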

Depending on the shape and relative intensity distribution of a PSF, the MTF will change. For example, a PSF that is very narrow in the horizontal direction provides an MTF with a high cutoff frequency along that axis (i.e., it can resolve fine detail that varies horizontally, such as closely spaced vertical bars); however, if the same PSF is elongated along the vertical axis, then its MTF has a low cutoff frequency in the vertical direction and it will only poorly resolve detail that varies vertically, such as closely spaced horizontal lines.
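The link between PSF shape and MTF can be checked numerically. The following is a minimal sketch, assuming an elliptical Gaussian as a stand-in for a real PSF; the function names, grid size, and widths are illustrative choices rather than values from any specific meta-optic.

```python
import numpy as np

def gaussian_psf(n, sigma_x, sigma_y):
    """Hypothetical elliptical Gaussian PSF on an n x n pixel grid (unit total energy)."""
    coords = np.arange(n) - n // 2
    x, y = np.meshgrid(coords, coords)  # x varies along columns, y along rows
    psf = np.exp(-0.5 * ((x / sigma_x) ** 2 + (y / sigma_y) ** 2))
    return psf / psf.sum()

def mtf_from_psf(psf):
    """MTF as the magnitude of the 2-D Fourier transform of the PSF, normalized to 1 at DC."""
    otf = np.fft.fftshift(np.fft.fft2(np.fft.ifftshift(psf)))
    mtf = np.abs(otf)
    return mtf / mtf.max()

def samples_above(profile, level=0.5):
    """Count the frequency samples in a 1-D MTF slice that stay at or above `level`."""
    return int(np.count_nonzero(profile >= level))

n = 256
# Narrow along the horizontal axis, elongated along the vertical axis (assumed widths in pixels)
psf = gaussian_psf(n, sigma_x=1.5, sigma_y=6.0)
mtf = mtf_from_psf(psf)

center = n // 2
width_fx = samples_above(mtf[center, :])  # slice along horizontal spatial frequency
width_fy = samples_above(mtf[:, center])  # slice along vertical spatial frequency

print("MTF samples above 0.5 along fx:", width_fx)
print("MTF samples above 0.5 along fy:", width_fy)
```

In this sketch the MTF stays high over a wider band of horizontal frequencies than vertical ones, mirroring the narrow horizontal and elongated vertical extent of the PSF.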

For an array system, this presents an interesting design opportunity. Rather than requiring a single lens to achieve a diffraction-limited MTF over the entire input parameter space (e.g., over the full field of view, operating bandwidth, and different polarizations), individual lenses could be dedicated to a subset of that parameter space, with their PSFs optimized only for that particular purpose.

3-D Imaging

In some cases, one may only be interested in very specific types of information, such as 3-D content from a scene. Array systems comprising only two meta-optics have been demonstrated, in which one exhibits a PSF that is highly sensitive to depth, whereas the other is highly insensitive to depth. This approach leverages a PSF that rotates in plane as the point source distance varies, providing a direct readout of depth information. More classical depth-from-defocus approaches have also been demonstrated with meta-optics. In either case, the pairing of images from two different PSFs yields sufficient information both to reconstruct the scene and to overlay a depth map, while relying on only a single snapshot of the scene with a dual-aperture meta-optic.
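To make the idea of reading depth from a rotating PSF concrete, here is a toy sketch. It assumes a two-lobe PSF whose lobe axis rotates with distance, estimates that axis from second-order image moments, and maps angle to depth through a made-up linear calibration. The lobe model, the function names, and the calibration constants are all hypothetical; a real system would use a measured calibration curve.

```python
import numpy as np

def two_lobe_psf(n, angle_rad, separation=12.0, sigma=2.0):
    """Toy double-lobe PSF: two Gaussian lobes on an axis rotated by angle_rad."""
    coords = np.arange(n) - n // 2
    x, y = np.meshgrid(coords, coords)
    dx = 0.5 * separation * np.cos(angle_rad)
    dy = 0.5 * separation * np.sin(angle_rad)

    def lobe(cx, cy):
        return np.exp(-((x - cx) ** 2 + (y - cy) ** 2) / (2 * sigma ** 2))

    psf = lobe(dx, dy) + lobe(-dx, -dy)
    return psf / psf.sum()

def lobe_axis_angle(image):
    """Orientation of the intensity pattern from its second central moments.

    The result is only defined modulo pi, so it lies in (-pi/2, pi/2].
    """
    n_rows, n_cols = image.shape
    y, x = np.mgrid[0:n_rows, 0:n_cols]
    total = image.sum()
    x0 = (x * image).sum() / total
    y0 = (y * image).sum() / total
    mu20 = ((x - x0) ** 2 * image).sum()
    mu02 = ((y - y0) ** 2 * image).sum()
    mu11 = ((x - x0) * (y - y0) * image).sum()
    return 0.5 * np.arctan2(2 * mu11, mu20 - mu02)

def depth_from_angle(angle_rad, slope_m_per_rad=0.5, offset_m=1.0):
    """Hypothetical linear angle-to-depth calibration (constants are placeholders)."""
    return offset_m + slope_m_per_rad * angle_rad

true_angle = 0.4  # radians, assumed rotation for a point source at some distance
point_image = two_lobe_psf(64, true_angle)
estimated_angle = lobe_axis_angle(point_image)
estimated_depth = depth_from_angle(estimated_angle)
print("estimated angle (rad):", estimated_angle)
print("estimated depth (m):", estimated_depth)
```

This handles only an isolated point source. For an extended scene, the depth-insensitive image from the second aperture is what makes it possible to separate scene content from the PSF rotation.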

Convolutional Optics for Information Processing

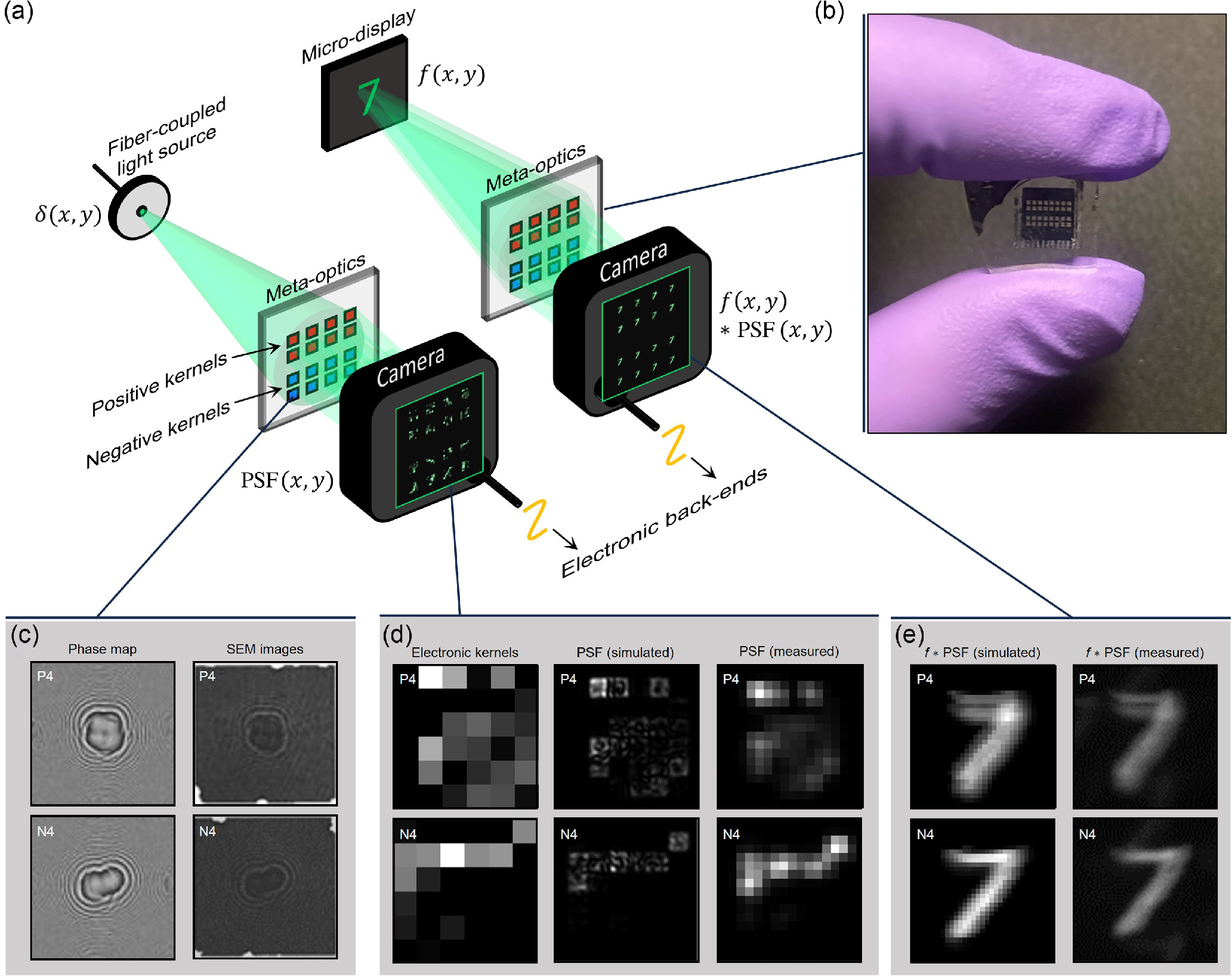

There are even more exotic cases, where extended arrays of dozens or hundreds of meta-optics have been fabricated adjacent to one another, each with a unique PSF designed to mimic a kernel of a convolutional neural network. Under a narrow field of view, the shift-invariant property of a PSF is maintained and as such, a meta-optic can perform a convolution directly on incident light, even for ambient incoherent light. This property has enabled early lab-based demonstrations of metasurface arrays that offloaded hundreds of convolutions that are typically performed digitally to the optical domain, leading to a reduction in the number of operations required for AI inference in an image classification neural network.
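The shift-invariant imaging model behind this can be sketched in a few lines. The example below assumes an idealized incoherent system in which the recorded image is the scene convolved with the PSF, with circular boundaries for simplicity. Because an intensity PSF cannot be negative, the sketch splits a signed kernel into two non-negative PSFs and subtracts the two sub-images digitally. That split is an assumption made here for illustration, not necessarily how any particular demonstration was built, and all function names are hypothetical.

```python
import numpy as np

def incoherent_image(scene, psf):
    """Idealized shift-invariant imaging: scene circularly convolved with the PSF (via FFT)."""
    k_rows, k_cols = psf.shape
    padded = np.zeros(scene.shape, dtype=float)
    padded[:k_rows, :k_cols] = psf
    # Move the kernel center to the origin so the output is not spatially shifted
    padded = np.roll(padded, (-(k_rows // 2), -(k_cols // 2)), axis=(0, 1))
    return np.real(np.fft.ifft2(np.fft.fft2(scene) * np.fft.fft2(padded)))

def split_signed_kernel(kernel):
    """Split a signed kernel into two non-negative parts: kernel = positive - negative."""
    return np.clip(kernel, 0, None), np.clip(-kernel, 0, None)

def direct_circular_convolution(scene, kernel):
    """Reference digital convolution computed tap by tap with circular shifts."""
    out = np.zeros(scene.shape, dtype=float)
    k_rows, k_cols = kernel.shape
    for i in range(k_rows):
        for j in range(k_cols):
            shift = (i - k_rows // 2, j - k_cols // 2)
            out += kernel[i, j] * np.roll(scene, shift, axis=(0, 1))
    return out

# Example signed kernel (a Sobel edge filter) standing in for a learned CNN kernel
kernel = np.array([[-1.0, 0.0, 1.0],
                   [-2.0, 0.0, 2.0],
                   [-1.0, 0.0, 1.0]])
rng = np.random.default_rng(0)
scene = rng.random((32, 32))  # non-negative intensities

psf_pos, psf_neg = split_signed_kernel(kernel)
# Two "optical" channels, each with a physically allowed non-negative PSF
optical = incoherent_image(scene, psf_pos) - incoherent_image(scene, psf_neg)
digital = direct_circular_convolution(scene, kernel)
print("max difference:", np.abs(optical - digital).max())
```

The only digital work left in this sketch is one subtraction per output pixel; the multiply-accumulate operations of the convolution are carried by the optics.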

Multispectral or Hyperspectral Systems

Another example of an imaging array well suited to implementation with metasurfaces is a snapshot-based multispectral or hyperspectral camera. There are a number of architectures for achieving this, but in its most basic form it consists of an array of metalenses, each with its entrance pupil in the plane of the nanostructures, paired with a set of color filters on the sensor. In this type of design, each metalens is unique and optimized for operation with its specific color filter. This approach constrains the field of view because of the significant aberrations associated with these lens designs. Modifications to the lens design (e.g., utilizing a telecentric meta-optic) can mitigate this, but the distortion associated with this approach must be taken into account as well. Overall, compared to existing hyperspectral snapshot systems, meta-optics can achieve much faster lens speeds.
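On the postprocessing side, the raw frame from such a camera is a mosaic of sub-images, one per metalens and filter. A minimal sketch of slicing that frame into a spectral cube is shown below, assuming a regular grid of equally sized tiles with no gaps. The function name and the grid layout are illustrative, and real systems would also need per-channel registration to handle parallax and distortion.

```python
import numpy as np

def frame_to_cube(frame, grid_rows, grid_cols):
    """Split a monochrome frame into a cube of shape (channels, tile_h, tile_w).

    Channels are ordered row by row across the lens grid (an assumed convention).
    """
    height, width = frame.shape
    tile_h, tile_w = height // grid_rows, width // grid_cols
    # Drop any leftover pixels that do not fill a whole tile
    cropped = frame[: tile_h * grid_rows, : tile_w * grid_cols]
    tiles = cropped.reshape(grid_rows, tile_h, grid_cols, tile_w)
    return tiles.transpose(0, 2, 1, 3).reshape(grid_rows * grid_cols, tile_h, tile_w)

# Tiny synthetic frame: a 2 x 2 lens grid on a 6 x 8 pixel sensor
frame = np.arange(6 * 8).reshape(6, 8)
cube = frame_to_cube(frame, grid_rows=2, grid_cols=2)
print(cube.shape)
```

Each slice `cube[k]` then holds the image seen through the k-th filter, ready for registration and spectral analysis.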

A key challenge for any snapshot hyperspectral system is the color filter design and integration. There are numerous solutions for commercial color filters, but their customization and fabrication can be quite involved and are often expensive processes that are difficult to scale to high volumes in a manner that is amenable to sensor integration at low cost. In the academic community, researchers have demonstrated metasurface-based spectral filters and have also shown experimentally how these could be built monolithically on the same substrate as that of the metalenses. While this approach has a number of its own challenges in terms of fabrication complexity and yield, the architecture is promising in offering a single substrate that when paired with an existing monochrome sensor would form a hyperspectral camera.

Systems for Measuring Other Lightfield Parameters

Beyond spectral content and adjusting the MTF of individual lenslets, arrays can be generalized to determine other lightfield parameters (e.g., polarization and wavevector). These can be accomplished via sensor-side modifications, such as incorporating varied polarizers onto different subregions of a detector; however, meta-optics offer a compelling platform for these types of measurements in that their scatterer designs can be modified to directly discriminate or filter light before it reaches the sensor. Asymmetric scatterer cross sections can distinguish horizontal and vertical polarizations, and more complex meta-atom designs enable discriminating all the Stokes parameters characterizing the polarization of a wavefront.
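For the polarization case, the Stokes parameters follow from a small set of analyzed intensity images. The sketch below assumes six sub-images taken behind ideal analyzers (0°, 90°, 45°, 135°, right circular, left circular); the function names are hypothetical, and the sign convention for the fourth parameter varies between references.

```python
import numpy as np

def stokes_from_intensities(i_0, i_90, i_45, i_135, i_rcp, i_lcp):
    """Per-pixel Stokes parameters from six ideal analyzer images, stacked as (4, H, W)."""
    s0 = i_0 + i_90      # total intensity
    s1 = i_0 - i_90      # horizontal vs. vertical linear
    s2 = i_45 - i_135    # +45 deg vs. -45 deg linear
    s3 = i_rcp - i_lcp   # right vs. left circular (sign convention assumed)
    return np.stack([s0, s1, s2, s3])

def degree_of_polarization(stokes, eps=1e-12):
    """Fraction of the light that is polarized, per pixel."""
    s0, s1, s2, s3 = stokes
    return np.sqrt(s1 ** 2 + s2 ** 2 + s3 ** 2) / (s0 + eps)

# Single-pixel example: fully horizontally polarized light of unit intensity
shape = (1, 1)
stokes = stokes_from_intensities(
    i_0=np.full(shape, 1.0), i_90=np.full(shape, 0.0),
    i_45=np.full(shape, 0.5), i_135=np.full(shape, 0.5),
    i_rcp=np.full(shape, 0.5), i_lcp=np.full(shape, 0.5),
)
dop = degree_of_polarization(stokes)
print(stokes[:, 0, 0], dop[0, 0])
```

Fewer than six measurements can suffice in practice, since four suitably chosen analyzer states already determine the four Stokes parameters; six are used here only because the arithmetic is easiest to read.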

Key Design Tradeoffs and Limitations

While array-based systems offer a number of interesting capabilities, enabling snapshot-based captures with individual optics optimized to extract specific information, there are some important design tradeoffs and limitations to keep in mind. In any array system with a fixed pixel count, the number of individual channels drives the pixel count per channel. As more channels (i.e., more lenses) are added to the array, the spatial resolution becomes coarser. In some cases this may not matter, but for many imaging systems it would be unacceptable, as it would reduce the number of pixels per degree and limit the resolving power of the system.
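The arithmetic behind this tradeoff is simple enough to sketch. The example below assumes that the lens tiles fill the sensor with no gaps and that every channel images the same field of view; the sensor size and field of view are illustrative numbers, not figures from a specific product.

```python
def per_channel_sampling(sensor_px_h, sensor_px_v, grid_cols, grid_rows,
                         fov_h_deg, fov_v_deg):
    """Pixels and pixels per degree available to each channel of a lens array."""
    px_h = sensor_px_h // grid_cols
    px_v = sensor_px_v // grid_rows
    return px_h, px_v, px_h / fov_h_deg, px_v / fov_v_deg

# Assumed example: 4000 x 3000 pixel sensor, 60 x 45 degree field of view per channel
single = per_channel_sampling(4000, 3000, 1, 1, 60.0, 45.0)
array_4x4 = per_channel_sampling(4000, 3000, 4, 4, 60.0, 45.0)

print("single aperture:", single)
print("4 x 4 array:   ", array_4x4)
```

Under these assumptions, going from one aperture to a 4 x 4 array cuts the horizontal sampling from roughly 67 to roughly 17 pixels per degree, which is the resolution penalty described above.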

Instead of keeping the pixel count fixed, one could use a larger-format sensor or multiple sensors. In an application with a high power budget that is not overly cost sensitive, this may be acceptable, but that is often not the case. From a systems perspective, the sensor itself is often one of the most power-consuming components in a camera, and power budgets can be quite tight, on the order of hundreds of mW in many consumer use cases.

Another challenge arises when sensor customizations become necessary (e.g., modifying color filters or adjusting the chief ray curve of the sensor). Sensor customization is not only technically challenging, for the most part confining this type of development to the cleanrooms of state-of-the-art sensor manufacturers, but is also often cost prohibitive. Sensor manufacturers are often quite reluctant to customize their products for a new use case unless it has a well-justified business case that could substantially contribute to their bottom line. The costs and time required to develop a custom process can be extremely high, and the potential return on investment must warrant the risk.

Summary

In summary, array-based imaging systems offer the ability to capture information beyond what you can collect with a monocular camera design, and meta-optics offer a compelling platform for implementing these systems because of their monolithic nature and their lightfield manipulation capabilities through complex scatterer designs. Key use cases include multispectral and hyperspectral imaging, 3-D imaging, optical information processing using convolutional optics, and lightfield cameras. While array-based meta-optical cameras offer several unique advantages compared to monocular systems, there are a number of critical design tradeoffs to consider. Sensor customizations or modifications to the system design are often necessary (e.g., to adapt color filters, or to integrate additional digital processing to synthesize information from the frames of individual lenslets). At a systems level, if the unique advantages of an array-based system can overcome the challenges with sensor integration (or potentially customization) and any impacts on form factor and power budget are tolerable, then these architectures offer a powerful, snapshot-based imaging modality.

References

Wirth-Singh, Anna, et al. “Compressed meta-optical encoder for image classification.” Advanced Photonics Nexus 4.2 (2025): 026009. https://doi.org/10.1117/1.APN.4.2.026009

McClung, Andrew, et al. “Snapshot spectral imaging with parallel metasystems.” Science Advances 6.38 (2020): eabc7646.

Greengard, Adam, Yoav Y. Schechner, and Rafael Piestun. “Depth from diffracted rotation.” Optics Letters 31.2 (2006): 181-183.

Colburn, Shane, and Arka Majumdar. “Metasurface generation of paired accelerating and rotating optical beams for passive ranging and scene reconstruction.” ACS Photonics 7.6 (2020): 1529-1536.

Guo, Qi, et al. “Compact single-shot metalens depth sensors inspired by eyes of jumping spiders.” Proceedings of the National Academy of Sciences 116.46 (2019): 22959-22965.

Lin, Ren Jie, et al. “Achromatic metalens array for full-colour light-field imaging.” Nature Nanotechnology 14.3 (2019): 227-231.

Arbabi, Ehsan, et al. “Full-Stokes imaging polarimetry using dielectric metasurfaces.” ACS Photonics 5.8 (2018): 3132-3140.

Rubin, Noah A., et al. “Matrix Fourier optics enables a compact full-Stokes polarization camera.” Science 365.6448 (2019): eaax1839.